7 Performance Metrics to Track When Delivering AI-Powered Solutions

These are the metrics that separate AI projects delivering real business value from ones that only look good in a demo, as told by the people tracking them inside live deployments.

Most AI projects fail at measurement before they fail at anything else. Teams ship with benchmark scores and sandbox accuracy numbers, then realize six months in that they have no signal on whether the system is actually helping a user, a customer, or the business. The founders and engineers below have been through that gap and come out the other side with metrics that actually tell them something. Their answers cover task deflection rate, real-world task success, time to first share, time to decision, time to first meaningful response, instruction adherence rate, and citation share. Each one explains why that specific number earned a permanent spot on their dashboard and what it exposed that traditional metrics missed.

Deploying AI-powered solutions requires tracking the right metrics to ensure they deliver actual business value. This article outlines seven critical performance indicators that separate successful implementations from underwhelming ones, drawing on insights from experts who have built and scaled AI systems in production. These metrics go beyond vanity numbers to measure what truly matters: whether your AI solution is solving real problems for real users.

Prioritize Deflection over Benchmarks

Measure Real-World Success Rate

Cut Time to Initial Public Post

Shorten Decision Cycle for Operators

Ensure Immediate Reply Matches Intent

Maximize Instruction Adherence for Agents

Monitor Citation Prevalence on Priority Queries

Prioritize Deflection over Benchmarks

The metric most teams ignore but should track first is task deflection rate.

When we deliver an AI-powered solution, whether it is a chatbot, a workflow automation layer, or an AI-integrated LMS, the first question we ask is how many tasks that previously required human intervention the system is now handling independently. That single number tells you whether the AI is actually working or just sitting on top of an existing process looking impressive.

We built a mobile app for a marketing agency where the AI layer handled over 75% of customer queries within the first month of launch. That also produced a 60% reduction in manual ticket creation. Those two numbers came directly from tracking task deflection rate from day one, not after the project closed.

The reason most AI implementations disappoint clients is that teams measure the wrong things early. They track model accuracy in testing environments but never define what operational success looks like in production. Accuracy in a sandbox means nothing if the system cannot handle real user behavior at volume.

We set deflection rate targets before we write a single line of AI integration code. That forces the entire architecture conversation to stay grounded in business outcomes rather than technical features. The metric shapes the build, not the other way around.

Raj Jagani, CEO, Tibicle LLP
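As a rough illustration of the deflection rate described above, the sketch below counts the share of tasks closed without a human touching them. The `Task` record and its `handled_by_ai` flag are hypothetical names chosen for this example, not anything from the contributor's system, and the sample data is made up.

```python
from dataclasses import dataclass


@dataclass
class Task:
    task_id: str
    handled_by_ai: bool  # assumption: True if no human touched the task


def deflection_rate(tasks: list[Task]) -> float:
    """Share of tasks the AI handled without human intervention."""
    if not tasks:
        return 0.0
    deflected = sum(1 for t in tasks if t.handled_by_ai)
    return deflected / len(tasks)


# Made-up sample: three of four tasks were closed by the AI alone.
tasks = [
    Task("t1", True),
    Task("t2", True),
    Task("t3", False),
    Task("t4", True),
]
rate = deflection_rate(tasks)
print(f"Deflection rate: {rate:.0%}")
```

Setting a target for this number before the build starts, as the contributor suggests, only requires agreeing up front on what counts as "handled by AI" in the task log.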

Measure Real-World Success Rate

One performance metric we consistently track when delivering AI-powered solutions is real-world task success rate: the percentage of user interactions where the AI completes the intended task correctly without human intervention.

Unlike offline accuracy scores, this metric reflects how the system performs in production, under real user behavior, noisy inputs, and edge cases. Whether it's an AI chatbot resolving queries, a document parser extracting fields, or a recommendation engine guiding decisions, we measure how often the AI actually delivers the expected outcome end-to-end.

This helps us quickly identify gaps between model accuracy and practical usability, prioritize model improvements, and ensure the AI is driving measurable business value rather than just achieving high benchmark scores.

Indibus Software, AI Development Company & IT Staffing Company
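One possible way to compute the real-world task success rate described above is to count an interaction as a success only when the task was completed correctly and no human stepped in, then break the rate down by task type. The field names (`task_type`, `completed_correctly`, `human_intervened`) are assumptions made for this sketch.

```python
from collections import defaultdict


def success_rate_by_type(interactions: list[dict]) -> dict[str, float]:
    """Per task type: share of interactions completed correctly with no human help."""
    totals: dict[str, int] = defaultdict(int)
    successes: dict[str, int] = defaultdict(int)
    for it in interactions:
        totals[it["task_type"]] += 1
        # Assumption: success requires BOTH a correct outcome and no human intervention.
        if it["completed_correctly"] and not it["human_intervened"]:
            successes[it["task_type"]] += 1
    return {task: successes[task] / totals[task] for task in totals}


# Made-up production log entries.
interactions = [
    {"task_type": "chat", "completed_correctly": True, "human_intervened": False},
    {"task_type": "chat", "completed_correctly": True, "human_intervened": True},
    {"task_type": "parse", "completed_correctly": True, "human_intervened": False},
    {"task_type": "parse", "completed_correctly": False, "human_intervened": False},
]
rates = success_rate_by_type(interactions)
print(rates)
```

Splitting by task type is a design choice for the example; it makes it easier to see which workflow is responsible for a gap between offline accuracy and production results.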

Cut Time to Initial Public Post

I'm Runbo Li, Co-Founder & CEO at Magic Hour.

The metric that matters most to us is time-to-first-share. Not render time, not model accuracy scores, not some abstract quality benchmark. How fast does someone go from opening Magic Hour to sharing a finished video with the world? That's the number we obsess over.

Here's why. Early on, we noticed something counterintuitive. Users who spent more time on the platform actually churned faster. They were tinkering, tweaking, second-guessing. The users who stuck around, who became paying customers, who told their friends, were the ones who got a video they were proud of in under five minutes and immediately posted it. Speed to output wasn't just a UX preference. It was the single strongest predictor of retention and word-of-mouth growth.

We had a small business owner, a bakery in Texas, who told us she used to spend an entire Saturday afternoon trying to make one Instagram Reel. With Magic Hour, she made three videos in 20 minutes on a Tuesday morning. She shared all three that week. Her engagement tripled. She upgraded to a paid plan within days. That story repeats itself thousands of times across our user base.

So when we evaluate any new template, any model upgrade, any UI change, the first question is always: does this get someone to a shareable result faster or slower? If it's slower, it doesn't ship. I don't care how technically impressive it is. A feature that adds friction is a feature that kills growth.

A lot of AI companies get seduced by benchmarks that only engineers care about. FID scores, inference latency in milliseconds, CLIP similarity. Those matter in the lab. They don't matter if someone opens your product, gets confused, and closes the tab. The only metric that proves your AI is actually delivering value is whether people use the output in the real world, publicly, with their name attached to it.

If your users aren't sharing what they make, your product isn't good enough yet.

Runbo Li, CEO, Magic Hour AI
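A minimal sketch of how time-to-first-share could be derived from a product event log follows. It assumes events arrive as `(user_id, event_name, timestamp)` tuples with hypothetical event names `"open"` and `"share"`; this is an illustration, not Magic Hour's actual pipeline.

```python
from datetime import datetime, timedelta
from statistics import median


def time_to_first_share(events):
    """Return (median seconds from first open to first share, share rate)."""
    first_open, first_share = {}, {}
    for user, name, ts in sorted(events, key=lambda e: e[2]):
        if name == "open":
            first_open.setdefault(user, ts)  # keep only the earliest open
        elif name == "share" and user in first_open:
            first_share.setdefault(user, ts)  # keep only the earliest share
    seconds = [(first_share[u] - first_open[u]).total_seconds() for u in first_share]
    median_seconds = median(seconds) if seconds else None
    # Users who never shared are excluded from the median but lower the share rate.
    share_rate = len(first_share) / len(first_open) if first_open else 0.0
    return median_seconds, share_rate


# Made-up events: two users share after 4 and 6 minutes, one never shares.
t0 = datetime(2024, 1, 1, 9, 0, 0)
events = [
    ("u1", "open", t0),
    ("u1", "share", t0 + timedelta(minutes=4)),
    ("u2", "open", t0),
    ("u2", "share", t0 + timedelta(minutes=6)),
    ("u3", "open", t0),
]
median_seconds, share_rate = time_to_first_share(events)
print(median_seconds, share_rate)
```

Reporting the share rate next to the median matters here: a fast median computed only from the users who shared would hide everyone who gave up before posting.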

Shorten Decision Cycle for Operators

I track a lot of things on AI projects, but the one metric I keep coming back to is "time to decision" for the person we're helping. If we say an AI system will help an underwriter, analyst, or ops leader act faster with the same or better quality, I want to see the average time from data coming in to a final decision actually drop in real life, not just in a slide or demo. When that comes down in a meaningful way and outcomes hold or improve, I know the system is doing real work for the business.

Alok Aggarwal, CEO & Chief Data Scientist, Scry AI
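The check described above has two parts: decision time must drop, and outcome quality must hold or improve. The sketch below encodes that as a simple comparison between a baseline period and the current period. The record shape `(hours_to_decision, outcome_ok)` and the 10% minimum drop are assumptions picked for illustration.

```python
from statistics import mean


def decision_cycle_report(baseline, current, min_time_drop=0.10):
    """Compare two lists of (hours_to_decision, outcome_ok) records."""
    base_time = mean(hours for hours, _ in baseline)
    cur_time = mean(hours for hours, _ in current)
    base_quality = mean(1.0 if ok else 0.0 for _, ok in baseline)
    cur_quality = mean(1.0 if ok else 0.0 for _, ok in current)
    time_drop = (base_time - cur_time) / base_time
    return {
        "time_drop": time_drop,
        "quality_held": cur_quality >= base_quality,
        # Assumption: "doing real work" means faster AND no worse on outcomes.
        "improved": time_drop >= min_time_drop and cur_quality >= base_quality,
    }


# Made-up records: average time falls from 12 to 9 hours, quality unchanged.
baseline = [(10, True), (14, True), (12, False), (12, True)]
current = [(8, True), (10, True), (9, False), (9, True)]
report = decision_cycle_report(baseline, current)
print(report)
```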

Ensure Immediate Reply Matches Intent

Honestly? The metric I keep coming back to is time-to-first-meaningful-response. Not just response time in the raw sense, but whether the first thing the AI said to a customer actually addressed what they came in with.

We built a voice AI system at Dynaris.ai and for a while we were obsessing over call completion rates, transfer rates, all the obvious stuff. But we kept seeing customers drop off even when technically the call "completed." Turns out the AI was answering, just not to the actual question. It was handling the call but missing the intent.

Once we started measuring whether the first response matched what the caller was actually trying to do, not just that it responded, everything else started to make more sense. That metric exposed where our prompting was off, where our intent classification was failing, and honestly where we'd just made bad assumptions about how customers talk about their problems.

It's not a sexy metric. You can't pull it from a dashboard automatically; you have to listen to calls and build the scoring. But it's the one that tells you whether your AI is actually helping someone or just technically not failing.

Every other number (conversion, retention, CSAT) tends to follow once that one is clean.

Peter Signore, CEO, Dynaris
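Since this metric depends on people listening to calls and scoring them, a sketch of the scoring step might look like the following. It assumes each reviewed call carries two human-assigned labels, `caller_intent` and `first_response_intent`; both field names and the sample intents are hypothetical.

```python
from collections import Counter


def first_response_match(reviews: list[dict]) -> tuple[float, Counter]:
    """Return (match rate, count of missed calls per caller intent)."""
    if not reviews:
        return 0.0, Counter()
    # A miss is any call where the first response addressed a different intent.
    misses = Counter(
        r["caller_intent"]
        for r in reviews
        if r["caller_intent"] != r["first_response_intent"]
    )
    matched = len(reviews) - sum(misses.values())
    return matched / len(reviews), misses


# Made-up reviewer labels for four calls.
reviews = [
    {"caller_intent": "reschedule", "first_response_intent": "reschedule"},
    {"caller_intent": "billing", "first_response_intent": "hours"},
    {"caller_intent": "billing", "first_response_intent": "billing"},
    {"caller_intent": "reschedule", "first_response_intent": "reschedule"},
]
match_rate, misses = first_response_match(reviews)
print(match_rate, misses.most_common(1))
```

The per-intent miss counts point at where to look first, for example an intent the classifier confuses or a prompt that answers the wrong question.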

Maximize Instruction Adherence for Agents

I track one metric above all others: Instruction Adherence Rate (IAR). Forget simple latency. Speed doesn't matter if your AI agent wanders off-script. At MyOpenClaw, we learned the hard way that even a 5% drift in how an agent follows constraints can destroy user trust overnight.

When we developed TTprompt, our prompt management tool, we shifted our focus to version-controlled testing. We found that whenever our IAR dropped below 92%, human intervention spiked by nearly 40%. That is a massive productivity killer for any SaaS. For TaoTalk, my AI-dialogue product, we relentlessly monitored the "Retry Rate." By enforcing strict schema validation and iterative prompt refinement, we slashed retries from 18% down to 4%.

AI is unpredictable by nature. My job as a founder is to make it boringly reliable. If an agent can't stick to the instructions 95% of the time, it isn't ready for an enterprise-grade production environment. We don't ship "maybe." We ship results.

"In the age of generative AI, reliability is the new speed."

RUTAO XU, Founder & COO, TAOAPEX LTD
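One way to approximate an Instruction Adherence Rate is to validate every agent output against the constraints it was given and report the share that pass. The sketch below assumes the agent must return a JSON object with `intent` and `reply` keys and an `intent` from a fixed list; that schema is hypothetical, and only the 92% alert level is taken from the account above.

```python
import json

# Assumption: a made-up output contract for illustration only.
REQUIRED_KEYS = {"intent", "reply"}
ALLOWED_INTENTS = {"refund", "shipping", "other"}
ALERT_THRESHOLD = 0.92  # level below which human intervention reportedly spiked


def adheres(raw_output: str) -> bool:
    """True if the output is valid JSON that satisfies the contract."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return False
    return isinstance(data["intent"], str) and data["intent"] in ALLOWED_INTENTS


def instruction_adherence_rate(outputs: list[str]) -> float:
    if not outputs:
        return 0.0
    return sum(adheres(o) for o in outputs) / len(outputs)


# Made-up agent outputs: two valid, one off-list intent, one free text.
outputs = [
    '{"intent": "refund", "reply": "Started your refund."}',
    '{"intent": "chitchat", "reply": "Nice weather!"}',
    "Sure, here you go!",
    '{"intent": "shipping", "reply": "Arrives Friday."}',
]
iar = instruction_adherence_rate(outputs)
print(f"IAR: {iar:.0%}, needs attention: {iar < ALERT_THRESHOLD}")
```

Schema checks like this only catch structural drift. Constraints on tone or content would need their own checks, which this sketch does not attempt.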

Monitor Citation Prevalence on Priority Queries

The one metric I care about most is citation share across our target query set. If an AI-powered solution is meant to improve visibility or decision support, I want to know how often our pages are being surfaced and cited for the commercial questions that matter, not just how many clicks we got after the fact. That changed the way we measure performance, because it pushes us to build clearer, more source-worthy content instead of chasing volume for its own sake.

Callum Gracie, Founder, Otto Media
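Citation share over a target query set can be sketched as the fraction of tracked queries whose AI-generated answer cites your domain at least once. How the cited sources are collected is outside this example; the input mapping, the queries, and the `.example` domains are all placeholders.

```python
def citation_share(citations_by_query: dict[str, list[str]], our_domain: str) -> float:
    """Share of target queries where our domain appears among the cited sources."""
    if not citations_by_query:
        return 0.0
    cited = sum(1 for sources in citations_by_query.values() if our_domain in sources)
    return cited / len(citations_by_query)


# Made-up observations: cited sources per priority query.
citations_by_query = {
    "best crm for agencies": ["reviews.example", "ourbrand.example"],
    "crm pricing comparison": ["reviews.example"],
    "how to migrate crm data": ["ourbrand.example", "docs.example"],
    "crm for small teams": ["forum.example"],
}
share = citation_share(citations_by_query, "ourbrand.example")
print(f"Citation share: {share:.0%}")
```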

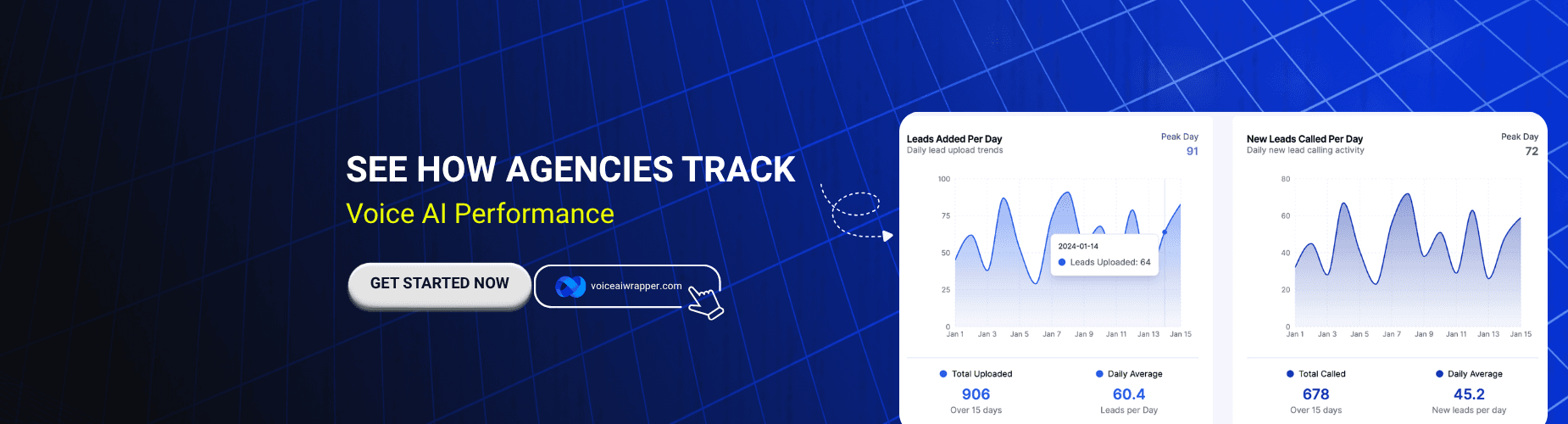

If you are building or reselling AI solutions and want your own KPIs tied directly to what your clients care about, a white label platform with real usage analytics makes that job a lot easier.
